Compression Language

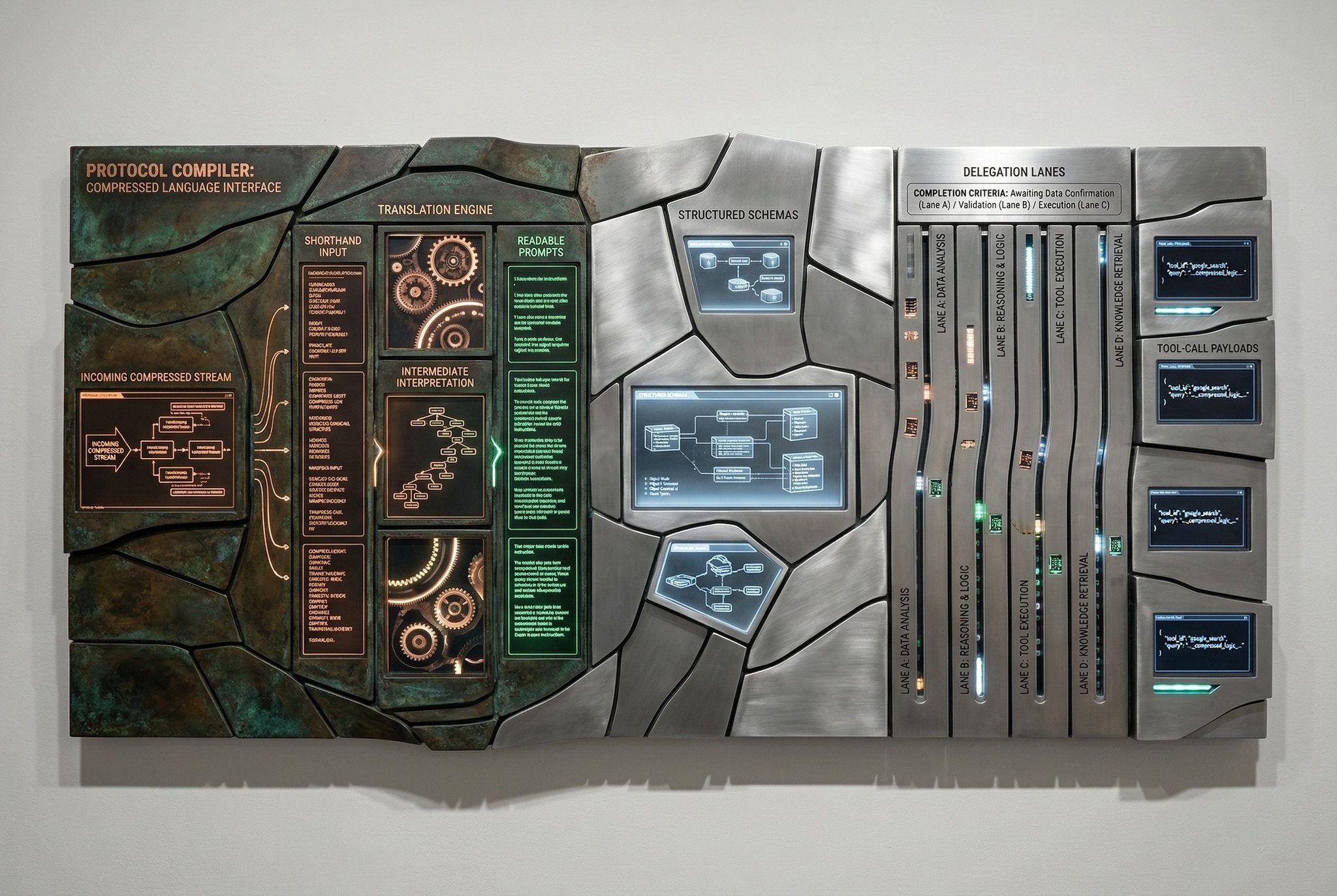

A purpose-built human-AI protocol can lower ambiguity per token by compressing intent, constraints, uncertainty, and output structure into a compact shared language.

Human-AI communication still relies on improvised natural language: flexible, familiar, and structurally inefficient.

Premise

- The same intent is often restated across sessions, tools, and models.

- Structure remains implicit, so ambiguity survives even long prompts.

- A compact protocol layer can raise signal density without abandoning ordinary language.

Mechanism

- Core Grammar

- Encode task type, objective, constraints, priorities, uncertainty, and desired output in a compact syntax.

- Example Syntax

task:delegate | role:research-agent | goal:competitor-brief | constraints:time<2h,budget=low | tools:web,docs | uncertainty:med | output:checklist

- Domain Module

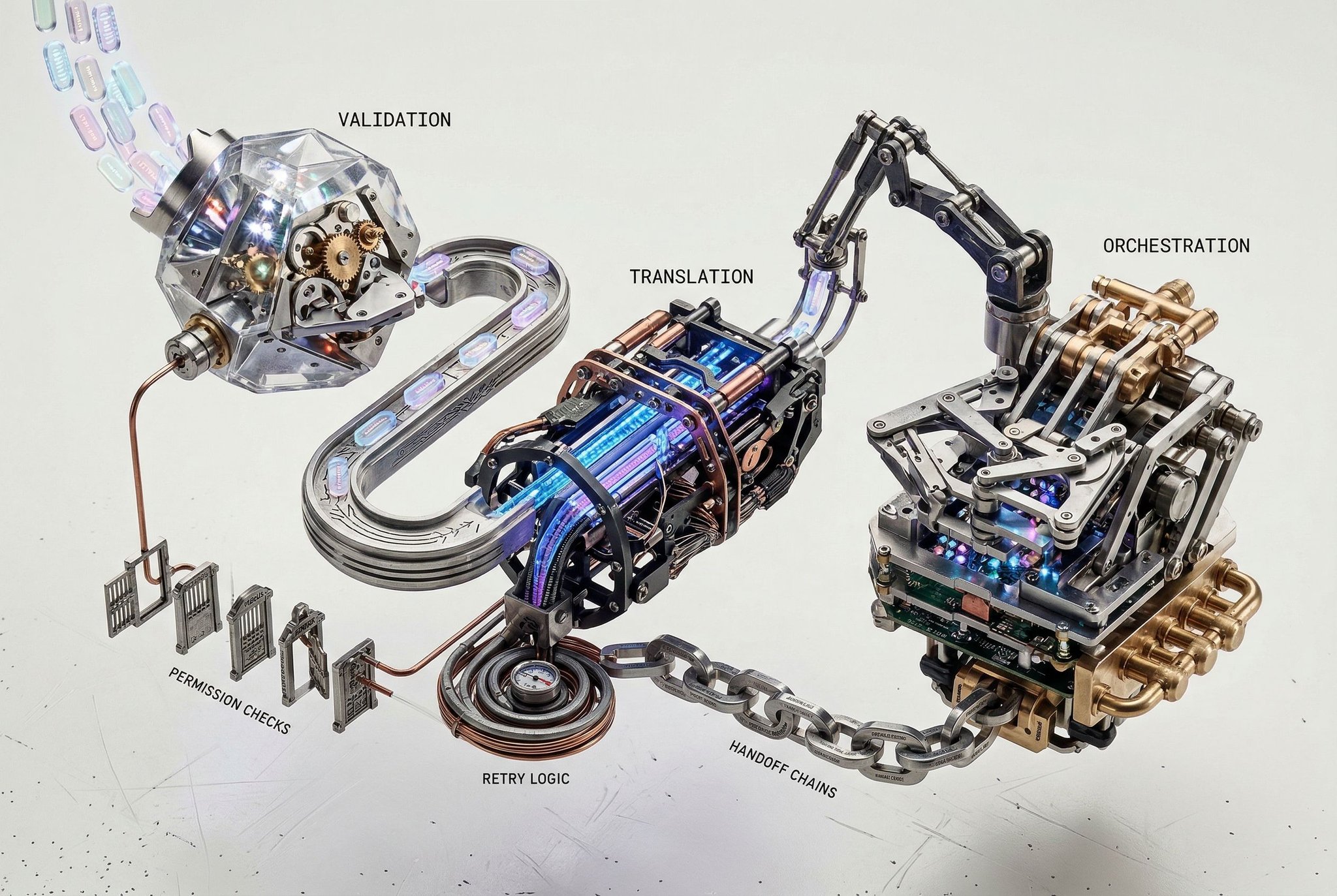

- Start with agent orchestration: delegation, tool permissions, retry rules, completion criteria, and handoff structure are repetitive enough to standardize.

- Translation Layer

- Expand shorthand into readable prompts, structured schemas, or tool-call payloads.

- Validation Layer

- Catch missing arguments, permission conflicts, invalid tool access, broken handoff chains, and underspecified completion criteria before execution.
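A minimal sketch of how the example syntax line might be parsed and checked before execution. The required-field set, tool registry, and role permission table are assumptions made for illustration and are not part of any published grammar. Only three of the checks listed above are covered (missing arguments, invalid tool access, permission conflicts); handoff chains and completion criteria are left out.

```python
# Hypothetical tables: none of these names come from a published spec.
REQUIRED_FIELDS = {"task", "goal", "output"}
KNOWN_TOOLS = {"web", "docs", "code"}
ROLE_PERMISSIONS = {"research-agent": {"web", "docs"}}
UNCERTAINTY_LEVELS = {"low", "med", "high"}


def parse(line: str) -> dict[str, str]:
    """Split 'key:value | key:value' shorthand into a dict of raw strings."""
    fields: dict[str, str] = {}
    for segment in line.split("|"):
        segment = segment.strip()
        if not segment:
            continue
        # Split on the first colon only, so values may contain '<', '=' or ','.
        key, sep, value = segment.partition(":")
        key, value = key.strip(), value.strip()
        if not sep or not key or not value:
            raise ValueError(f"malformed segment: {segment!r}")
        if key in fields:
            raise ValueError(f"duplicate field: {key!r}")
        fields[key] = value
    return fields


def validate(fields: dict[str, str]) -> list[str]:
    """Return human-readable problems; an empty list means the line passes."""
    problems = []
    for name in sorted(REQUIRED_FIELDS - set(fields)):
        problems.append(f"missing required field: {name}")

    tools = [t.strip() for t in fields.get("tools", "").split(",") if t.strip()]
    for tool in tools:
        if tool not in KNOWN_TOOLS:
            problems.append(f"unknown tool: {tool}")

    role = fields.get("role", "")
    allowed = ROLE_PERMISSIONS.get(role)
    if allowed is not None:
        for tool in tools:
            if tool in KNOWN_TOOLS and tool not in allowed:
                problems.append(f"role {role} may not use tool: {tool}")

    level = fields.get("uncertainty")
    if level is not None and level not in UNCERTAINTY_LEVELS:
        problems.append(f"invalid uncertainty level: {level}")
    return problems


example = (
    "task:delegate | role:research-agent | goal:competitor-brief | "
    "constraints:time<2h,budget=low | tools:web,docs | uncertainty:med | "
    "output:checklist"
)
print(validate(parse(example)))
print(validate(parse("task:delegate | role:research-agent | tools:web,code")))
```

Returning a list of problems instead of raising on the first one lets an editor or linter show every issue in a line at once.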

Why This Matters

- Lowers ambiguity per token rather than merely shrinking prompts.

- Makes repeated workflows more consistent across time and teams.

- Creates a shared control surface between human intent and model behavior.

- Because the protocol compiles into explicit structures, delegated work becomes loggable, replayable, and auditable.
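One way to make delegated work loggable and replayable is to store each compiled instruction with a digest of its canonical form. The sketch below assumes the instruction has already been parsed into a flat dictionary; the record layout, the `audit_record` name, and the version string are illustrative, not a defined format.

```python
import hashlib
import json


def audit_record(fields: dict[str, str], protocol_version: str = "0.1") -> dict:
    """Build a log entry whose digest changes whenever any field changes."""
    # Sorted keys and fixed separators give one canonical serialization, so the
    # same instruction hashes identically regardless of field order.
    canonical = json.dumps(fields, sort_keys=True, separators=(",", ":"))
    digest = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    return {"version": protocol_version, "fields": dict(fields), "digest": digest}


first = audit_record({"task": "delegate", "goal": "competitor-brief"})
reordered = audit_record({"goal": "competitor-brief", "task": "delegate"})
print(first["digest"] == reordered["digest"])
```

Replaying a run then amounts to reloading `fields` from the log and confirming the digest still matches before re-executing.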

First Battlefield

- Initial domain: agent orchestration.

- Why start here:

  - Tasks are structured and repetitive.

  - Failure costs are visible.

  - Intent, constraints, and outputs can be benchmarked.

  - The protocol can prove value before tackling open-ended dialogue.

What Must Be Proven

- Does the protocol reduce tokens without increasing cognitive load?

- Does it improve time-to-correct-output versus plain language?

- Can multiple model families parse it reliably?

- Does explicit uncertainty marking reduce downstream errors?

- At what point does compression become harder to learn than the work it saves?

Adoption Mechanics

- The language should emerge through tooling, not doctrine.

- Editors, autocomplete, prompt compilers, browser overlays, and linting matter more than notation alone.

- If memorization cost is front-loaded, adoption fails.

Trade-offs

- More structured than freeform prompting, but less natural for casual interaction.

- Better for repeatable work than exploratory conversation.

- Compact syntax can create false precision unless uncertainty and context gaps remain explicit.

- Cross-model robustness may force the grammar to be less elegant than a single-model-optimized design.

- Without versioning and schema compatibility rules, teams may fork the protocol into mutually unintelligible dialects.
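As an illustration of what a compatibility rule could look like, the sketch below assumes each message carries a `major.minor` protocol version and that a runtime accepts any message with the same major version and an equal or lower minor version. This rule is borrowed from common semantic-versioning practice and is an assumption here, not the protocol's actual policy.

```python
def parse_version(text: str) -> tuple[int, int]:
    """Turn 'major.minor' (or bare 'major') into a pair of integers."""
    major, _, minor = text.partition(".")
    return int(major), int(minor or 0)


def is_compatible(message_version: str, runtime_version: str) -> bool:
    """Assumed rule: same major version, message minor not newer than runtime."""
    msg_major, msg_minor = parse_version(message_version)
    run_major, run_minor = parse_version(runtime_version)
    return msg_major == run_major and msg_minor <= run_minor


print(is_compatible("1.2", "1.4"))  # older minor, same major
print(is_compatible("2.0", "1.4"))  # different major
```

A rule this strict would force forks to bump the major version, which at least makes a dialect split visible instead of silent.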

Strategic Edge

- The defensible layer is not the shorthand itself.

- It is the compiler that translates compact human intent into validated prompts, schemas, and agent instructions.

- If successful, human-AI interaction shifts from improvised prompting to protocol-driven coordination.

Generation Prompts

Image Prompt: A premium human-AI interface visualization showing dense natural-language blocks collapsing into a clean protocol grid with pipe-delimited syntax strings, tagged fields, uncertainty markers, validation warnings, schema panels, compiler output panes, and agent tool-call cards, dark glass UI, luminous white-blue typography, precise information architecture, cinematic lighting, ultra-detailed minimalist cognitive control room aesthetic.

Video Prompt: Slow cinematic transition from chaotic scrolling natural-language prompts into a refined compressed protocol interface, pipe-delimited syntax tokens snapping into ordered lanes, validation warnings flashing briefly, schema panels and agent tool-call outputs expanding in sync, dark premium UI atmosphere, crisp volumetric light, restrained futuristic motion graphics.

Constraints & Non-Goals

- The protocol must augment natural language rather than attempt to replace it entirely.

- It must remain learnable by humans and reliably parseable across multiple model families.

- The first deployment should target one narrow, high-value workflow, with agent orchestration as the default first testbed.

- Compression must expose uncertainty, assumptions, and missing context rather than hiding them behind terse notation.

Feasibility Gradient

The concept is plausible as protocol design, not as a universal new language. Humans already use compact operational notations in mathematics, programming, medicine, and control systems, while AI systems respond well to structured schemas, tags, and constrained syntax. The main risks are overdesign, false precision, and model variance: a notation that saves tokens but increases human effort or parses inconsistently is a regression. The strongest near-term path is a small core grammar with domain modules, translation layers, and tooling that expands shorthand into readable prompts, schemas, and agent instructions while flagging ambiguity before execution.

Next Actions

- Define a minimal grammar for intent, constraints, priority, uncertainty, and output format.

- Prototype the first domain module for agent orchestration with delegation, tool permissions, retry rules, and completion criteria.

- Build a compiler that expands compressed notation into readable prompts, structured schemas, and tool-call payloads.

- Benchmark token savings, time-to-correct-output, ambiguity rate, cross-model reliability, and user error recovery against plain prompting.
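The compiler step could start as small as the sketch below, which expands already-parsed fields into a readable prompt and a structured payload. The field values mirror the example syntax line; the function names and the payload shape are hypothetical and do not follow any particular vendor's tool-call format.

```python
import json

# Already-parsed fields, mirroring the example syntax line in this document.
FIELDS = {
    "task": "delegate",
    "role": "research-agent",
    "goal": "competitor-brief",
    "constraints": "time<2h,budget=low",
    "tools": "web,docs",
    "uncertainty": "med",
    "output": "checklist",
}


def split_list(value: str) -> list[str]:
    """Split a comma-separated value, dropping empty items."""
    return [item.strip() for item in value.split(",") if item.strip()]


def to_prompt(fields: dict[str, str]) -> str:
    """Expand shorthand fields into a plain-language prompt."""
    constraints = split_list(fields.get("constraints", ""))
    tools = split_list(fields.get("tools", ""))
    lines = [
        f"You are acting as: {fields.get('role', 'unspecified')}.",
        f"Task type: {fields['task']}. Goal: {fields['goal']}.",
        "Constraints: " + (", ".join(constraints) or "none stated") + ".",
        "Permitted tools: " + (", ".join(tools) or "none") + ".",
        f"Stated uncertainty: {fields.get('uncertainty', 'not stated')}.",
        f"Return the result as: {fields['output']}.",
    ]
    return "\n".join(lines)


def to_payload(fields: dict[str, str]) -> dict:
    """Expand the same fields into a structured payload (hypothetical shape)."""
    return {
        "name": fields["task"],
        "arguments": {
            "role": fields.get("role"),
            "goal": fields["goal"],
            "constraints": split_list(fields.get("constraints", "")),
            "allowed_tools": split_list(fields.get("tools", "")),
            "uncertainty": fields.get("uncertainty"),
            "output_format": fields["output"],
        },
    }


print(to_prompt(FIELDS))
print(json.dumps(to_payload(FIELDS), indent=2))
```

Both outputs derive from the same parsed fields, so the human-readable prompt and the machine payload cannot drift apart.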

Restricted Layer

Full grammar design, syntax rules, compiler behavior, benchmark results, linting logic, domain extensions, versioning rules, compatibility policies, observability design, and the IP layer around adaptive shorthand generation and protocol compilers would sit behind the access wall.

Last updated: March 20, 2026