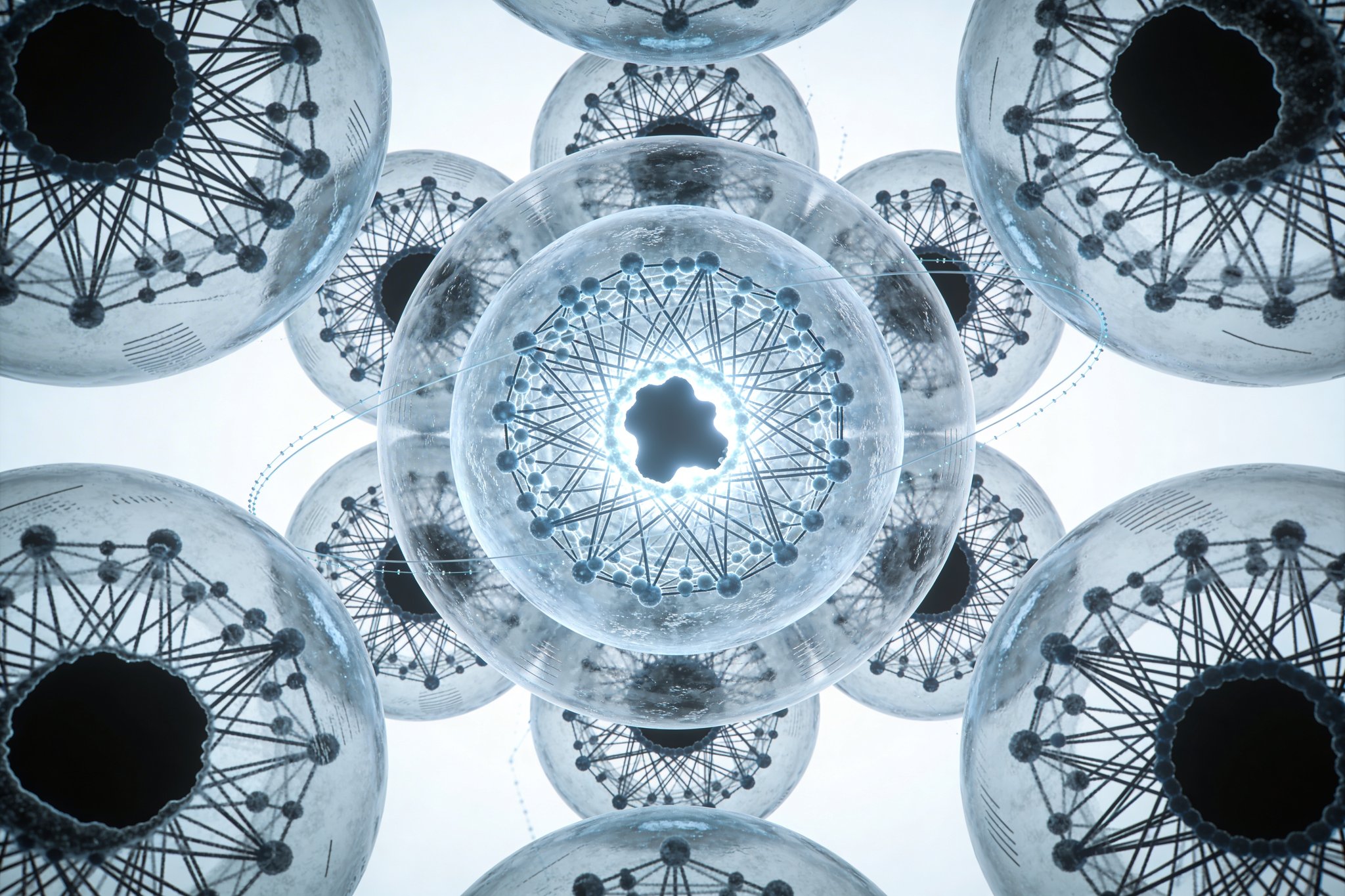

Concentric Neural Sphere

Evaluate whether adjacency-dominant concentric-shell graphs (radial message passing; skip-links budgeted) achieve better accuracy-per-compute and scale generalization on hierarchical spatial tasks than grids/CNN-U-Nets, transformers, and unconstrained GNNs under matched training budgets.

Design Intent: Locality Across Scale, Not Coordinates

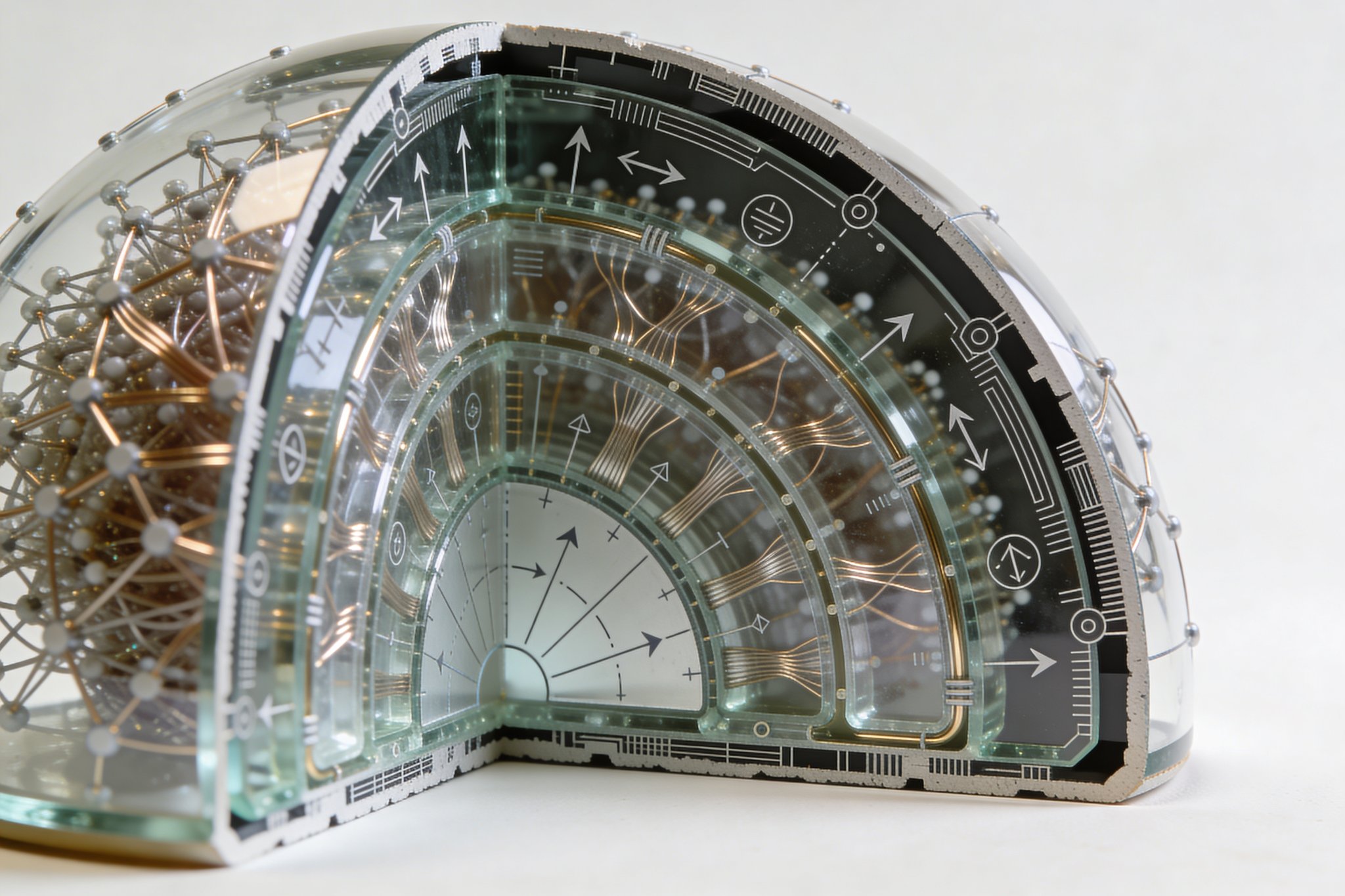

Hierarchical spatial tasks are nested: environments contain regions, regions contain objects, objects contain parts. A grid biases locality in Cartesian coordinates; a transformer biases global mixing. The concentric sphere imposes a third bias: locality across scale bands. The outer shells carry high-detail, high-bandwidth signals; inner shells are forced to form low-bandwidth commitments.

The claim is narrow: for tasks whose causal structure is coarse-to-fine, radial compression with adjacency-dominant wiring can reduce active compute while improving generalization to larger scales.

Substrate Specification: Shell Discretization Must Be Deterministic

A “sphere” is only meaningful if discretization is fixed and reproducible.

- Shells: (S_0 \dots S_k), where (S_0) is peripheral input and (S_k) is the core readout.

- Per-shell node placement: choose one deterministic equal-area method and lock it:

- Fibonacci sphere sampling (simple, stable across resolutions), or

- Icosahedral subdivision (structured, mesh-friendly).

- Lateral neighborhoods: within shell (S_i), define neighbors via k-nearest geodesic distance (k fixed per shell, optionally scaling with shell resolution).

- Topology fingerprints: every run logs the discretization method, random seed, degree distribution per shell, edge length distribution, and wormhole fraction. The topology itself becomes a comparable artifact.

This removes “it worked because of implementation details” as an escape hatch.

Connectivity Contract: Allowed Edges, Budgeted Exceptions

The sphere is not a visual metaphor; it is a constraint that can be violated only at explicit cost.

Default edge rules

- Radial adjacency (primary): edges primarily connect (S_i \leftrightarrow S_{i+1}). This is the architectural invariant.

- Lateral edges (optional): within-shell edges only inside the geodesic k-NN neighborhood to prevent silent densification.

- Wormholes (optional, budgeted): skip edges across non-adjacent shells are permitted only under a hard count budget and/or Pareto penalty.

If wormholes are repeatedly selected by evolution, that is not failure—it is evidence about which task classes demand long-range dependencies and where the constraint breaks.

Computation: Radial Message Passing as Controlled Depth

Computation is expressed as propagation across shells. Depth is not “number of layers” but “number of radial transitions.”

Modes

- Radial feedforward: one outward-to-inward sweep; cheapest and clearest baseline.

- Bidirectional predictive flow (optional): inner shells send priors outward; outer shells return residuals inward. This tests iterative refinement without collapsing into unconstrained recurrence.

- Sparse activation routing (optional): per-input gating activates a subset of edges; compute is measured as E_active, not merely parameter count.

Readout strategy

- Core readout: decisions/actions emitted from (S_k).

- Auxiliary heads: intermediate shells may predict coarse targets (global map hints, object counts, low-res occupancy) to improve credit assignment through the bottleneck. These heads are ablated; they are not allowed to become hidden shortcuts.

Optimization: NEAT-Derived Structure Search, SGD for Weights

Pure neuroevolution over both topology and weights is compute-prohibitive for the scope. The pragmatic stance is hybrid.

Topology search

- Borrow NEAT’s efficiency levers (Stanley & Miikkulainen, 2002):

- Incremental complexification: start minimal (radial adjacency only) and grow.

- Speciation: prevent early extinction of structurally novel candidates.

- Mutations operate on: shell sizes, lateral k, wormhole budget, directionality, gating choices, and (optionally) learned shell membership.

Weight training

- Each candidate receives short-horizon SGD training under a locked schedule.

- Weight inheritance is applied when mutations are small to reduce evaluation variance.

- Multi-fidelity evaluation screens cheaply first (few steps/low-res) before full training.

Fitness and reporting

- Two experimental regimes:

- Hard-budget mode: reject candidates exceeding ((E_{active}\le E_{max},\ \text{hops}\le H_{max})).

- Pareto mode: evolve a trade-off front over task performance vs compute (E_active, hop depth, latency).

- A single deployment point is selected by a fixed policy (e.g., “max accuracy subject to latency < X ms”), not by post-hoc preference.

Benchmarks: Where This Bias Should Win (and Where It Should Lose)

The suite is chosen to separate structural advantage from tuning luck.

Hierarchical spatial reasoning

- Multi-scale mazes: local decisions + global goal inference; hold out larger maze sizes for scale generalization.

- Procedural scene-graph queries: nested entities (room→furniture→parts) with compositional questions; hold out deeper nesting than trained.

- 3D occupancy / SDF: coarse geometry guides fine detail; test generalization to higher resolution volumes.

Embodied control (optional)

- Partial observability settings where peripheral observation is high-dimensional and action is low-dimensional; measure stability under sensor dropout.

Counter-benchmarks

- Tasks intentionally lacking hierarchical spatial structure (e.g., IID classification with no multi-scale benefit). The sphere should not dominate; this guards against narrative-driven interpretation.

Primary metrics

- Sample efficiency, held-out scale generalization.

- Compute: E_active per sample, max-hop depth, and measured latency on a specified hardware target.

- Robustness: noise/occlusion/dropout sensitivity.

Baselines + Search Controls: Prevent False Attribution

Baselines must match both capacity and budget.

Model-family baselines

- CNN/U-Net style spatial models for grid-like tasks.

- Transformers for global mixing.

- Unconstrained GNNs for expressive graph computation.

- MLPs as a minimal control.

Search-method baselines (non-negotiable)

- Random search over the same shell generator parameterization (same budgets, same training).

- A sparsity-first training baseline (dynamic/adaptive sparse connectivity) to test whether gains come from the radial constraint rather than sparsity alone.

The outcome must answer: “Is the sphere doing real work, or is this just sparse training plus luck?”

Ablations: The Scientific Core

Ablations are defined to make it hard to lie.

- adjacency-only vs +lateral edges

- wormhole budget sweep (0 → permissive)

- feedforward vs bidirectional predictive flow

- dense activation vs sparse routing (measure E_active)

- fixed topology vs evolved topology vs random search

- strict shell assignment vs learned/soft shell membership

Every run exports topology fingerprints and a compute report, so comparisons are structural, not just numerical.

Interpretability: Topology Fingerprints as the Artifact

The product is not a single checkpoint; it is a rulebook extracted from evolution.

- recurrent microcircuits at shell boundaries (gating, pooling, arbitration)

- bottleneck archetypes (single core vs multi-core committees)

- degree distributions per shell and how they shift by task class

- wormhole placement statistics (which shell pairs, which functional roles)

- lesion tests: remove shells/edge classes; quantify collapse modes and compute savings

A practical deliverable is a “motif library” a builder can graft into other sparse or hierarchical systems.

Trade-offs and Failure Modes

- Bottleneck brittleness: strict adjacency can block long-range dependencies; wormhole frequency quantifies the minimum long-range requirement.

- Iterative refinement vs compute advantage: bidirectional flow may improve accuracy but erase latency gains; Pareto reporting prevents cherry-picking.

- Generator dependence: discretization choice can silently alter inductive bias; locking and logging discretization is mandatory.

- Search variance: multi-fidelity evaluation and weight inheritance reduce noise, but negative results are still valid if boundaries are sharp.

Decision-Grade Success Criteria

Any of the following is a “ship” outcome:

- A Pareto regime where the sphere matches/exceeds baseline accuracy at lower E_active/latency and better scale generalization.

- A negative result with clear boundaries: which task families break adjacency and the quantified wormhole requirement.

- Transferable motifs that survive grafting into non-spherical sparse architectures.

Generation Prompts

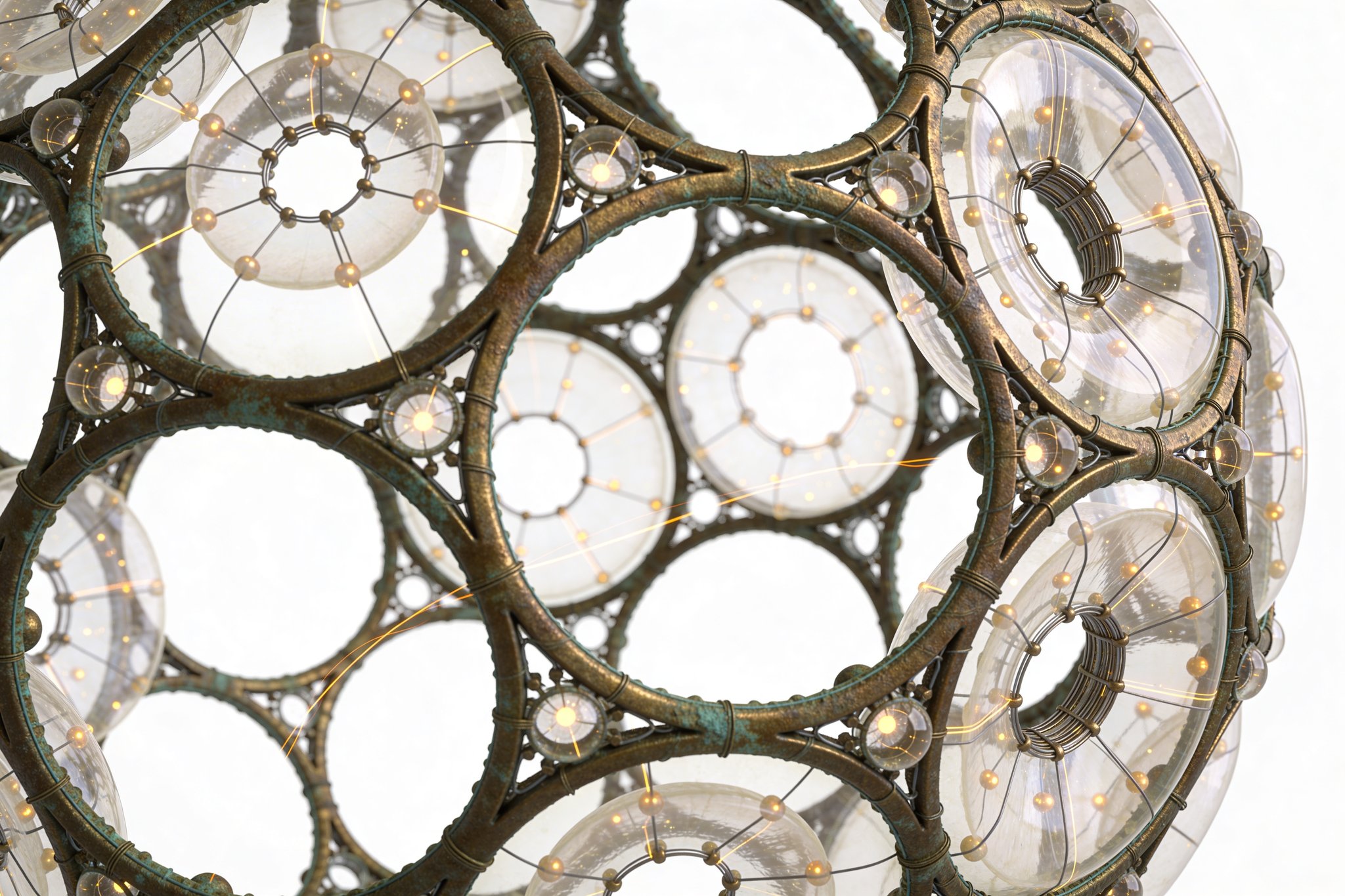

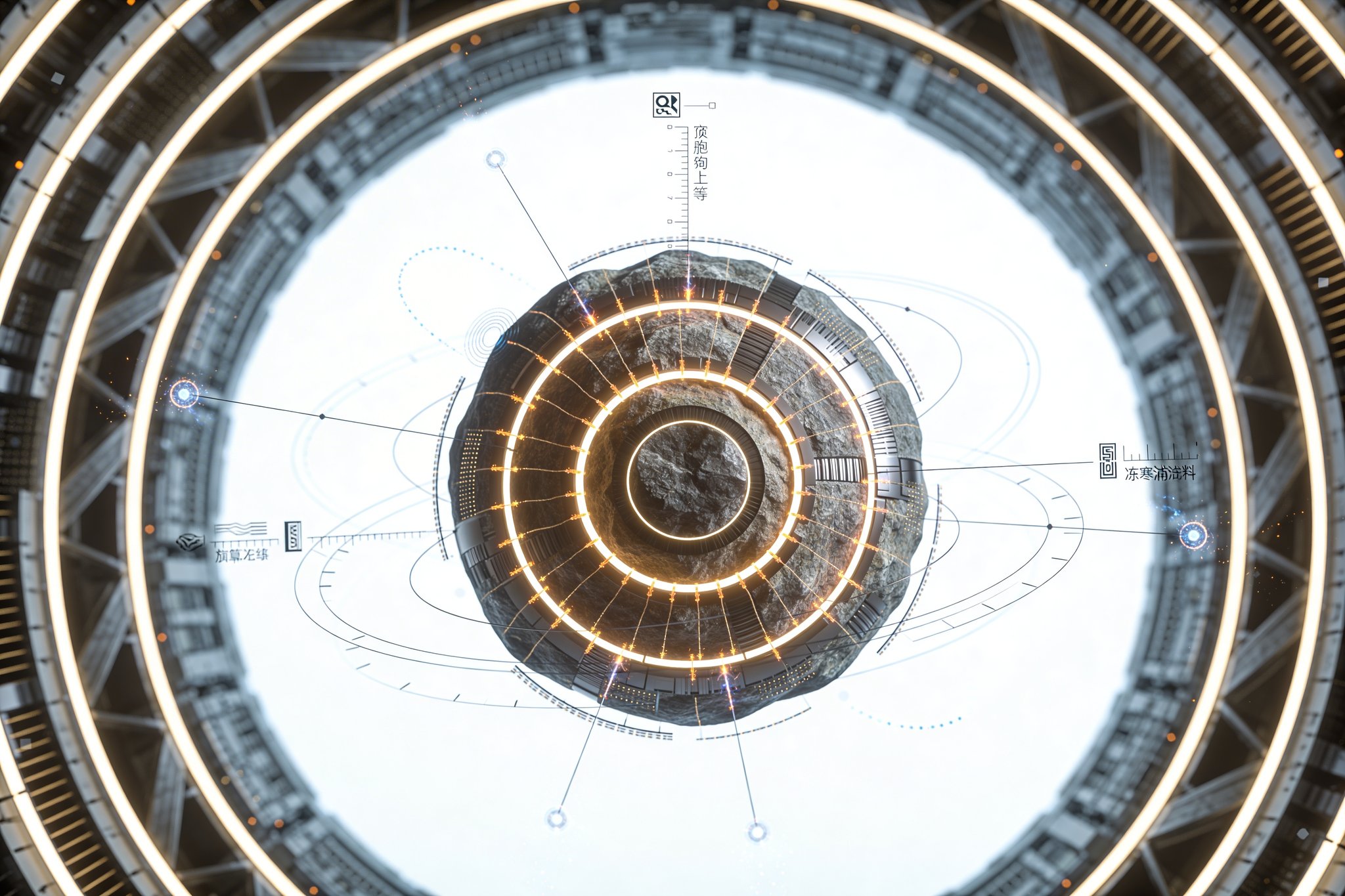

Image Prompt High-contrast scientific visualization of a concentric neural sphere: 6 translucent nested shells on a matte off-white infinity background, thousands of small emissive nodes, adjacency-dominant fiber bundles linking neighboring shells, sparse faint wormhole arcs crossing multiple shells, radial vector arrows indicating outer-to-inner propagation, crisp volumetric lighting, no depth-of-field, research-diagram precision, ultra-detailed 8k render.

Video Prompt 12–15 second slow orbital camera around a translucent concentric neural sphere on a matte off-white infinity background; pulses of light propagate from the outer shell inward in discrete waves, adjacency fibers ignite sequentially and converge into a bright core decision cluster; rare wormhole flashes arc across shells as brief highlights. Clean lab lighting, crisp focus across the full object, no depth-of-field, restrained timing.

3D Model Prompt Watertight 3D model of a concentric neural sphere with 6 nested shells, each shell a thin geodesic lattice populated by small node beads; connection fibers are separate spline meshes primarily linking adjacent shells with a configurable sparse set of long wormhole fibers. Materials: translucent shell glass, emissive nodes, satin-finish fibers. Organized hierarchy, consistent scale, optimized topology for real-time rendering.

Constraints & Non-Goals

- —Connectivity is adjacency-dominant between neighboring shells, with any skip links (“wormholes”) explicitly budgeted and penalized.

- —Success is defined by comparative benchmark performance under matched training budgets, not biological analogy.

- —The project must ship as a repeatable framework: topology generator, optimizer recipe, ablation suite, and topology interpretability reports.

- —Compute is reported as E_active per sample, max-hop depth, and wall-clock latency on target hardware; experiments run in hard-budget and Pareto modes.

Feasibility Gradient

The substrate is implementable today as a shell-partitioned graph with message passing and optional sparse routing/gating; topology search can borrow NEAT’s efficiency levers (Stanley & Miikkulainen, 2002)—incremental complexification from a minimal graph and speciation to protect structural innovation—while using SGD for short-horizon weight training with weight inheritance after small mutations; the main risk is evaluation variance and cost in joint structure+weight search, mitigated by multi-fidelity screening, strict compute accounting, and search-method baselines (random search and sparsity-first training controls) to avoid expensive inconclusive results.

Next Actions

- Specify and lock shell discretization (Fibonacci sphere or icosahedral subdivision), lateral k-NN geodesic rule, and deterministic seeding; ship the generator with topology fingerprint export.

- Implement an evolutionary loop with speciation + minimal-start complexification and weight inheritance; add multi-fidelity evaluation (cheap proxy → full train) with consistent budgets.

- Lock benchmarks and baselines, including search controls (random search over the same generator parameters) and a sparsity-first training baseline; publish a single evaluation protocol.

- Run a Pareto study over wormhole budgets, lateral k, bidirectionality, and routing sparsity; report accuracy vs E_active/latency curves and select deployment points via a fixed policy.

Interactive 3D Model

Restricted Layer

Restricted materials would include exact discretization and edge-construction formulas, tuned speciation/mutation schedules and inheritance heuristics, multi-fidelity proxy models, procedural benchmark generators and the full evaluation harness, ArX-assisted motif mining to classify evolved microcircuits, and a commercialization matrix mapping Pareto points to deployment targets and integration notes.

Request accessLast updated: February 23, 2026