Plasticity Engine

Build a production-grade continual-learning control stack where online adaptation is bounded to reversible modules (routers/adapters/memory) and promoted only through signed artifacts and non-regression gates, enabling multi-week improvement from real user feedback without silent regressions.

Objective (first principles)

Deployed systems face the stability–plasticity dilemma: environments shift while expectations remain fixed. Users change, tasks drift, tools evolve, and UI changes create new failure modes. A static model decays because its competence is measured against yesterday’s distribution.

Unconstrained learning in production is operational debt: silent regressions, biased feedback amplification, and post-hoc irreproducibility. Plasticity Engine treats adaptation as change management: maximize long-horizon utility subject to explicit non-regression invariants, safety constraints, and reversible deployment primitives.

Biological inspiration → engineering translation

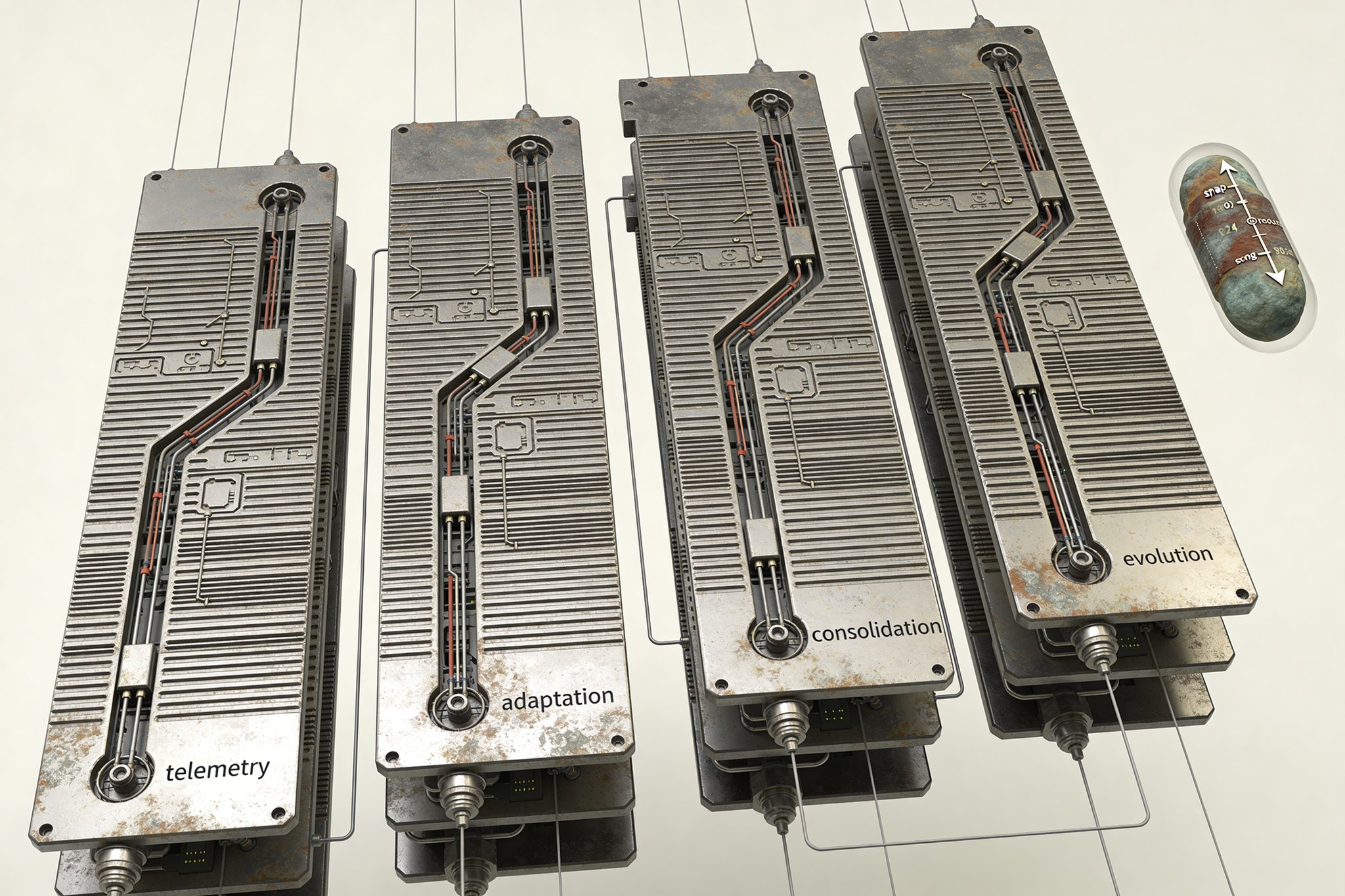

Biology survives continual learning via separation across timescales. The engineering translation is not metaphor; it is an interface contract for what is allowed to move.

- Plasticity (fast): bounded local change. Adapters/LoRA, lightweight heads, router weights, contextual bandits, short-horizon constrained RL on measurable rewards.

- Consolidation (slow): promote what stays valuable. Distillation, replay, offline evaluation, promotion only through gates.

- Metaplasticity (rule selection): decide when learning is allowed. Trigger policies, trust weighting, plasticity budgets, freeze conditions.

- Evolution (slowest): structural variation under multi-objective selection. Neuroevolution over module topology and routing policy with safe mutations.

Separation of concerns is enforced: gradients tune parameters inside bounded modules; evolution proposes structure and routing constraints that define the allowable change space.

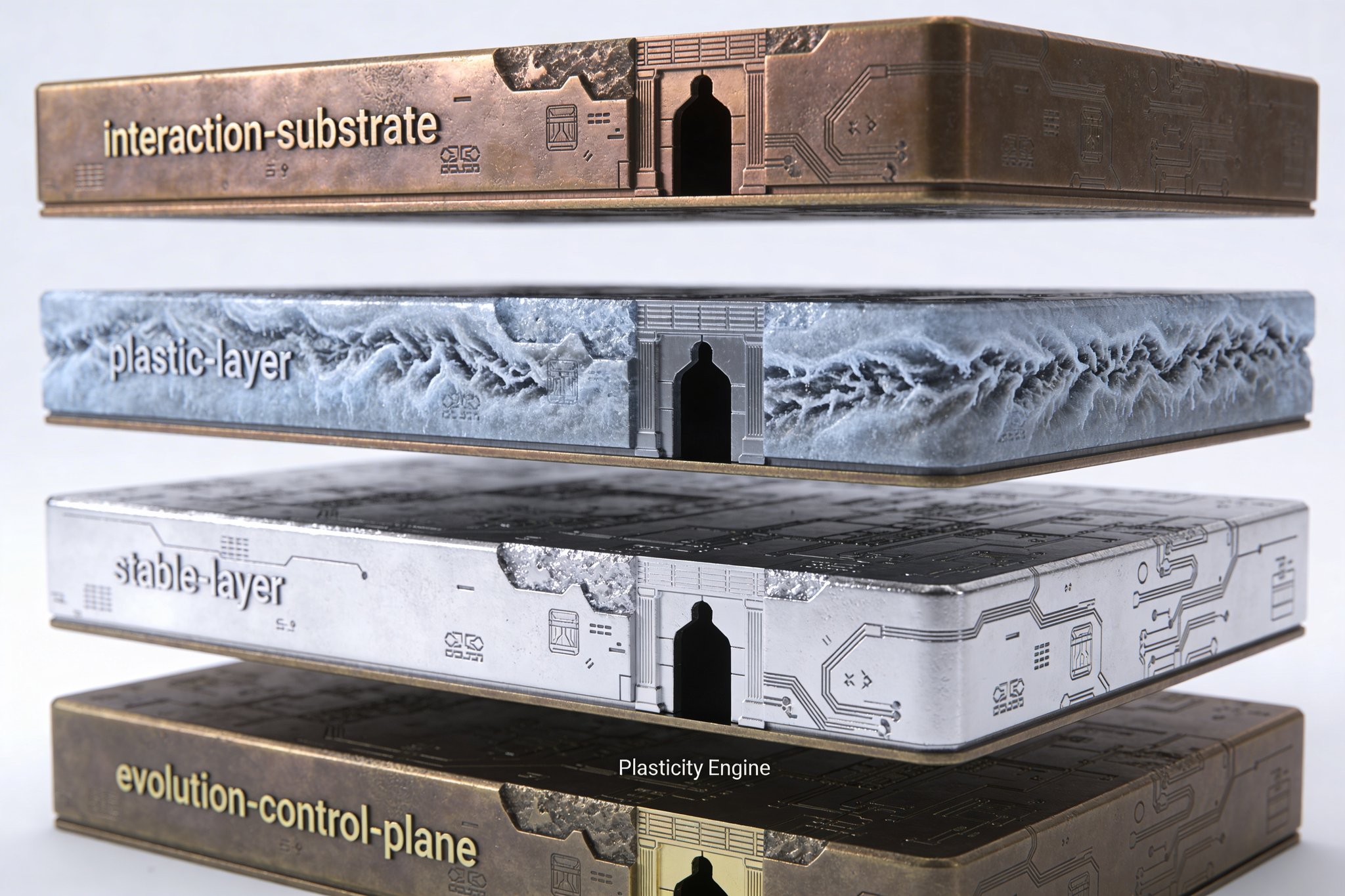

System architecture (execution-grade)

Plasticity Engine is a three-timescale learning stack plus a governance substrate. The bias is modularity: skills, memory, and routing are separable components with independent update cadences and rollback boundaries.

A) Interaction substrate (telemetry + feedback capture)

The substrate exists to make learning attributable and evaluable, not merely data-rich.

- Explicit feedback: accept/reject, edits, preference pairs, “use this output” confirmations, structured issue tags.

- Implicit feedback: retries, abandonment, correction rate, tool switching, undo actions, time-to-first-success.

- Counterfactual logging: record candidate sets vs. shown action with propensities to support unbiased evaluation and safer bandit updates.

- Provenance binding: model version, prompt/context hash, toolchain version, router policy hash, and config snapshot pointers.

Output is a signed event stream designed for replay, audit, and red-team forensics, with domain-dependent consent, retention, and deletion semantics.

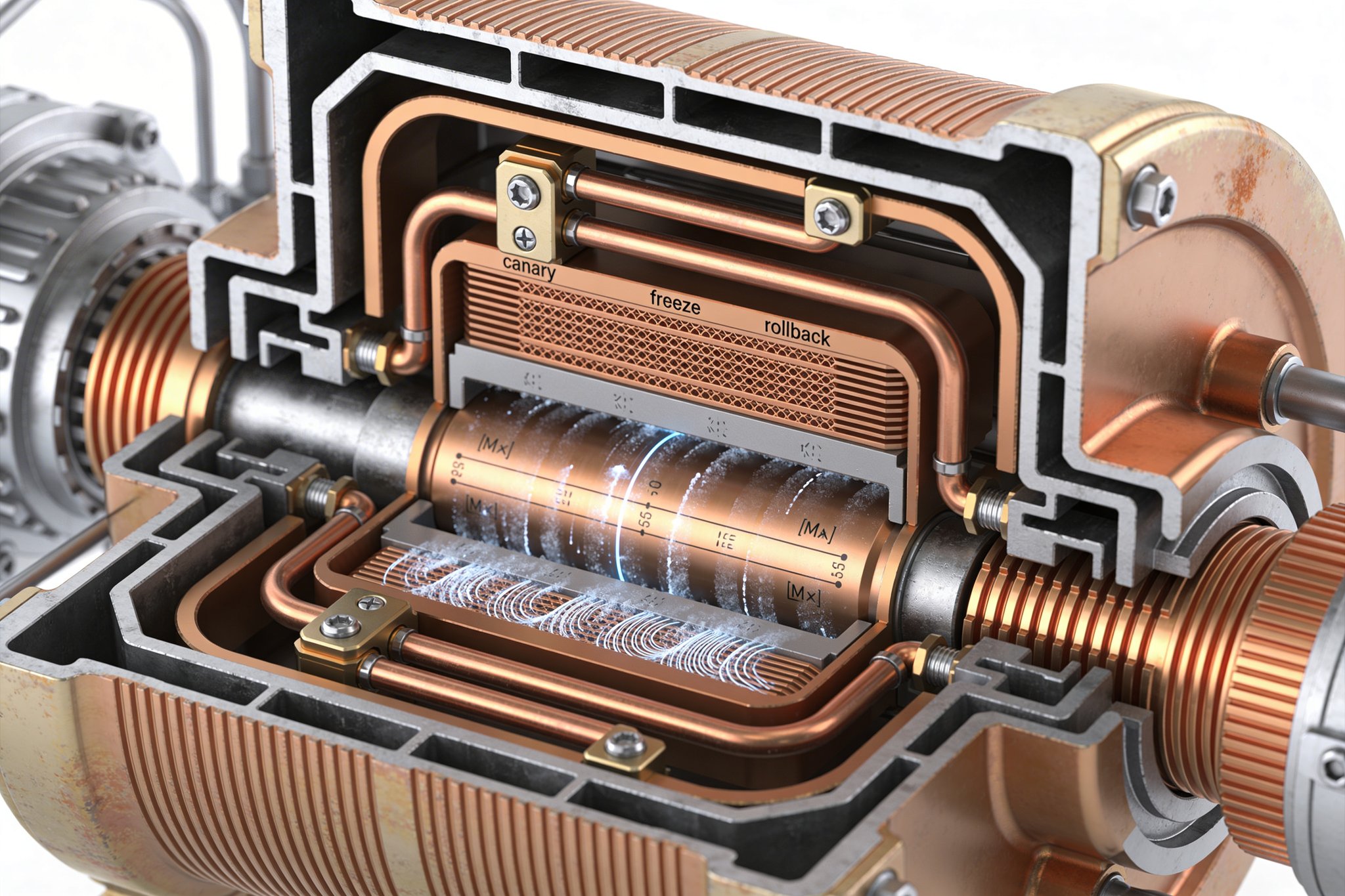

B) Plastic layer (fast adaptation, bounded)

Fast learning is allowed only inside strict envelopes; everything else is frozen.

- Parameter scope: adapters/LoRA, small heads, router weights; no full-model weight updates in the MVP.

- Decision surfaces: contextual bandits for routing and ranking where exploration is bounded and regret is measurable.

- Short-horizon RL (optional): only on constrained rewards (tool success, latency, correction rate) and only behind shadow/canary gates.

- Plasticity budgets: per user/task/channel caps on update magnitude and frequency; separate learning rates for explicit vs. implicit channels.

The goal is not global improvement. The goal is localized, reversible lift on feedback-dense surfaces with controlled failure modes.

C) Stable layer (slow consolidation)

The stable layer is the product’s legible baseline. It changes slowly and only via governed promotion.

- Periodic consolidation: promote only after replay and non-regression gates pass; distill adapter gains when warranted.

- Replay discipline: episodic buffers preserve rare critical behaviors; balance recent data with “do-not-forget” exemplars.

- Memory systems: retrieval-augmented episodic store with TTL, quotas, and explicit forgetting policies tied to privacy constraints.

- Non-regression as default: candidate updates are blocked if they violate invariants even if headline metrics improve.

“Lifelong learning” becomes defensible only when consolidation is more strict than optimization.

D) Evolutionary control plane (slowest)

Neuroevolution is treated as a control plane for structure, not a brute-force optimizer.

- Evolve: module topology, router policy classes, optimizer schedules, exploration parameters, memory budgets.

- Selection: multi-objective fitness across utility, safety violations, calibration, compute cost, latency, and stability.

- Safe mutations: bounded deltas only; no un-audited pathways; capacity expansion requires explicit budget approval.

- Triggers: run on plateau detection, drift alarms, or scheduled audits; not continuously.

Economic plausibility comes from small search spaces, explicit triggers, and hard cost ceilings.

Safety + governance (non-negotiable primitives)

Learning is a production change. Every change must be inspectable, replayable, and reversible at the artifact level.

MVP invariants (router/ranker surface)

These are blockers. They define “stable” operationally.

- Unsafe tool calls: zero tolerance (hard block; must remain 0).

- Anchor fingerprint drift: similarity on K anchor cases must remain above a threshold (e.g., cosine ≥ 0.98 on embeddings of router decisions and key outputs).

- Latency: p95 ≤ baseline + 10% and absolute p95 ≤ a fixed budget (product-specific).

- Calibration: ECE ≤ baseline + 0.01 on monitored decision confidences (or abstention rates within bounds if ECE is not defined).

- User correction rate: must not increase by ≥ 2% absolute week-over-week in canary.

- Exploration ceiling: max exploration probability ≤ a fixed cap (e.g., 1–5%) with immediate freeze on anomaly triggers.

Thresholds are domain-specific but must be numeric and enforced automatically.

Update gating and rollout

- Shadow mode: candidates run in parallel without affecting users; collect counterfactual outcomes.

- Canary deployment: limited exposure with hard stops tied to invariants and regression metrics.

- Evaluation gates: offline replay, anchor fingerprints, and red-team suites must pass before promotion.

- Freeze conditions: if drift/anomaly thresholds trigger, learning halts and the system reverts to a stable baseline.

Drift detection and behavioral fingerprints

Drift is defined as measurable movement in behavior, not metric wobble.

- Embedding distribution shift: detect changes in query/user/task embeddings suggesting environment movement.

- Reward channel health: monitor feedback sparsity, polarity flips, adversarial spikes, and identity churn.

- Behavioral fingerprints: anchor cases spanning safety, core competence, and “do-not-break” workflows; track deltas over time.

- Calibration monitoring: confidence/abstention behavior must remain within bounds under adaptation.

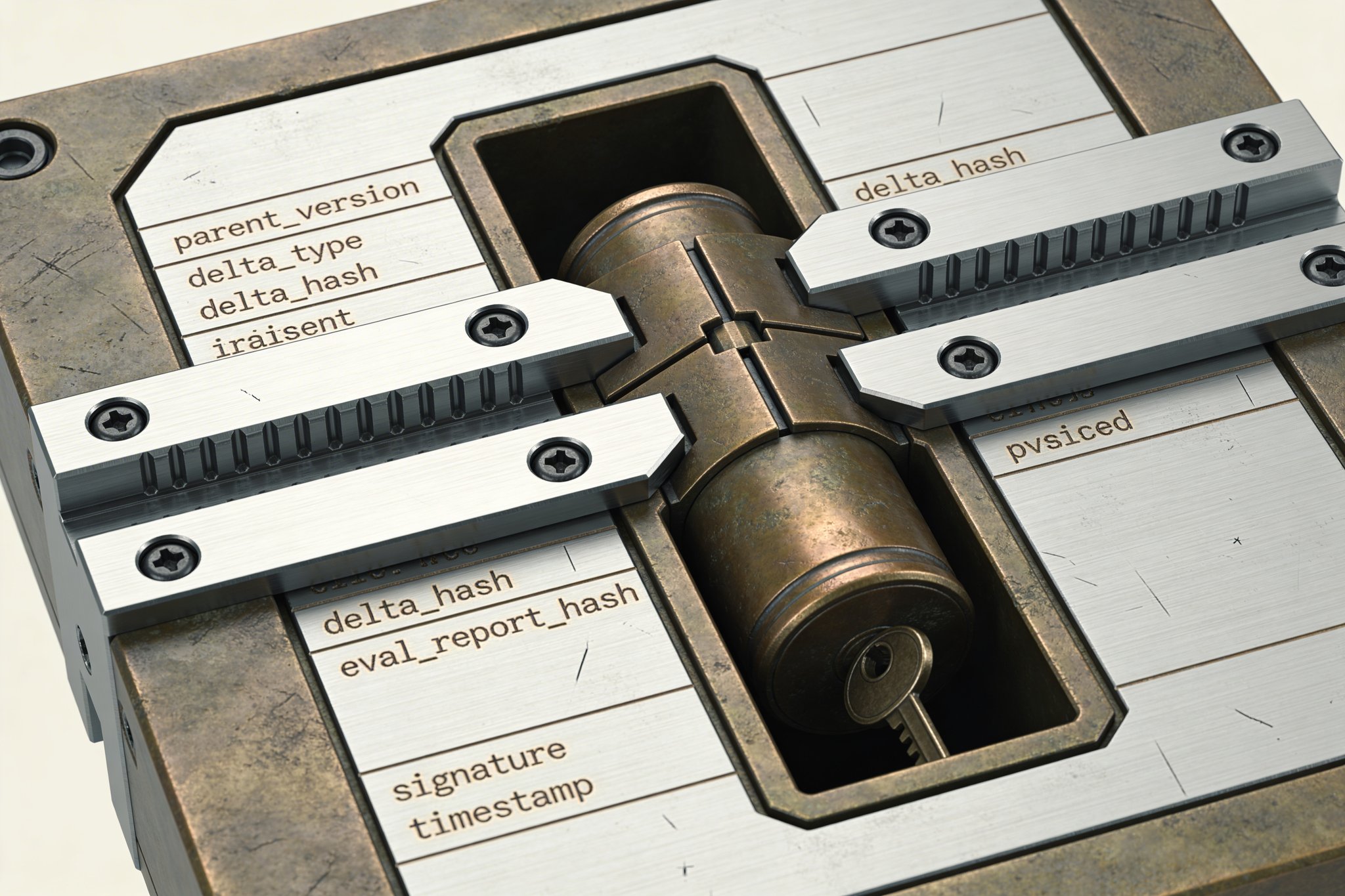

Rollback + lineage (reversibility as a feature)

Reversibility is concrete: it is the ability to return to a known artifact and reproduce why it was chosen.

- What is reverted: adapters/heads, router parameters, bandit posteriors, configuration, and memory writes within a defined window (via event-sourced or snapshot-based memory).

- What is not reverted: user trust and downstream real-world consequences; blast radius is bounded via canaries, exploration caps, and strict freezes.

- Immutable artifacts: every update produces a signed artifact containing the minimal replay set.

Signed update artifact (minimum fields)

{parent_version, delta_type(adapter/router/bandit/memory/config), delta_hash, eval_report_hash, data_snapshot_refs, policy_config_hash, rollout_scope, rollback_target, reviewer_signature, timestamp}

Attack resistance (feedback integrity)

- Trust weighting: feedback influence depends on identity confidence, history, and anomaly scores.

- Rate limits: prevent flooding and coordinated manipulation.

- Anomaly detection: detect reward hacking patterns and sudden preference inversions.

- Channel separation: explicit vs. implicit signals have separate caps and learning rates; implicit never overrides safety invariants.

What’s novel (falsifiable delta)

Continual learning literature centers the stability–plasticity dilemma: preserve learned competence while adapting online. Plasticity Engine’s delta is treating adaptation as auditable change management, not just an optimizer choice: every behavioral change is attributable to a signed artifact, evaluated against anchor fingerprints, and reversible via bounded modules.

Claim (router/ranker MVP): with counterfactual bandits + bounded adapters + freeze/rollback governance, sustain ≥ N% lift on a drifting router/ranker over ≥ 4 weeks while keeping anchor-set regressions ≤ M% and maintaining invariant compliance at 100% in canary.

Secondary gaps addressed as engineering research:

- Delayed credit: reconcile long-lag outcomes (retention, satisfaction) with short-horizon optimization without destabilizing policies.

- Evolve vs. gradient triggers: learn when structure/routing changes are justified vs. parameter tuning.

- Plasticity budgets as a resource: allocate adaptation capacity per user/task/channel to prevent overfitting and capture.

MVP path (de-risking plan)

The MVP must live on a narrow surface where feedback is dense and failure is containable.

Candidate MVP surfaces

- Tool router for creative workflows: select generator/toolchain steps (reference selection → model choice → refinement pass) optimizing success rate and time-to-result.

- Personalized ranking of assets/references: reorder candidates based on accept/use signals, minimizing abandonment and correction.

Phased build

- Phase 1: Logging + bandit harness (no weight updates)

- Instrument signed events, counterfactual logging, and offline replay.

- Deploy routing/ranking with controlled exploration and invariant gates.

- Phase 2: Adapter-based plasticity behind gates

- Introduce bounded adapter updates; enforce budgets and freeze conditions.

- Validate rollback and reproducibility under incident drills.

- Phase 3: Evolutionary search over router/module graph

- Constrain mutations and compute budgets; run only on plateau/drift triggers.

- Evaluate multi-objective fitness including cost and stability.

Success criteria (operational, not rhetorical)

- Sustained lift over multiple weeks under non-stationary usage.

- Anchor fingerprints remain stable within thresholds.

- Rollback works in practice: revert restores prior behavior and metrics within defined bounds.

- Manipulated or low-trust feedback fails to steer policies beyond exploration caps.

Interfaces and deliverables (Atlas-ready)

- System diagram: event flow, learning loops, artifact store, evaluation harness, deployment gates.

- Contracts: feedback API schema, signed update artifact schema, provenance + signing requirements.

- Risk register: reward hacking, bias amplification, drift, privacy leakage, replay poisoning, evaluation blind spots; each with mitigations and owners.

- Benchmark protocol: define “lifelong” via multi-week replay with shifting distributions plus invariant enforcement.

- Reference implementation skeleton: modular services (telemetry, trainer, evaluator, artifact registry, router) designed for ArX orchestration.

Generation Prompts

Image Prompt

A0 minimalist technical diagram poster (3:4), monochrome black/gray on white, IBM Plex Sans typography. Four stacked layers labeled Interaction Substrate, Plastic Layer, Stable Layer, Evolution Control Plane. Thin grid, precise arrows for shadow mode, canary gates, freeze, rollback. Callouts: “signed update artifact” fields and “anchor fingerprints” thresholds. No gradients, crisp vector lines.

Video Prompt

Crisp monochrome vector motion-graphics, no depth-of-field, 12–15 seconds at 24fps. Three acts: (1) telemetry events stream into a ledger with propensities, (2) a canary gate opens as a signed artifact stamps “approved,” (3) a drift alarm triggers freeze and a rollback rewinds to a prior version. Minimal typography, precise easing, high-end research aesthetic.

3D Model Prompt

Desktop manufacturable sculpture ~200mm tall: four snap-fit stacked slabs (3mm minimum wall) labeled substrate/plastic/stable/evolution, frosted acrylic with brushed aluminum connectors. Routed conduits and small gate levers indicate canary/freeze/rollback. Include detachable module blocks and a removable aluminum “artifact capsule” cylinder with a keyed lid and engraved lineage arrows filled with black epoxy.

Constraints & Non-Goals

- —All updates must be attributable, testable, and revertible; prohibit unlogged weight, memory, or architecture changes.

- —Learning is modular by default (skills, memory, routing) with explicit blast-radius boundaries and independent cadences.

- —Plasticity budget is explicit: bounded update magnitude and frequency per user/task/channel; anchor-set regression and latency deltas have hard ceilings.

- —No unbounded memory writes: episodic stores enforce TTL, per-user quotas, and deletion guarantees; update artifacts are signed and lineage-tracked with provenance.

Feasibility Gradient

Bounded adaptation is feasible today on narrow decision surfaces using contextual bandits, preference learning, and small parameter deltas (adapters/heads), backed by replay and strict offline evaluation. What remains hard is product-grade online evaluation under non-stationary users, integrity of feedback under manipulation, and guaranteeing non-regression beyond a curated anchor set. Neuroevolution is plausible as a slow outer loop only when constrained to small search spaces and invoked on plateaus or drift alarms; an MVP should exclude open-ended policy learning and focus on reversible router/ranker updates with canary, shadow-mode, and deterministic rollback.

Next Actions

- Lock an MVP surface (router or ranker) and publish a measurable invariant set with pass/fail thresholds and rollback targets.

- Implement the interaction substrate: signed event schema, counterfactual logging (propensities), consent/retention controls, and trust-weighted feedback API.

- Build the evaluation harness: anchor fingerprints, regression battery, drift detectors, shadow pipeline, and signed update artifact spec (minimum fields).

- Ship Phase 1 bandit router/ranker (no weight updates), then Phase 2 bounded adapters behind canary gates; demonstrate ≥4-week sustained lift with bounded regressions.

Interactive 3D Model

Restricted Layer

Restricted materials include module graph blueprints and service boundaries, adapter placement and consolidation strategies, replay/memory schemas with privacy semantics, safe-mutation operators and constrained search spaces for neuroevolution, full non-regression and red-team batteries (behavioral fingerprint suites, reward-channel integrity tests), governance playbooks (signing, provenance ledger, rollout/incident response), trigger policies for when learning is permitted, and a reference implementation skeleton aligned with ArX orchestration contracts.

Request accessLast updated: February 24, 2026