ReasonNet Trainer

A trainer network converts observations into testable structure artifacts, validates them via consistency/prediction/intervention gates, then emits bounded training actions so improvements are traceable to falsifiable intermediate claims rather than opaque gradient dynamics.

ReasonNet Trainer treats parameter updates as a decision process guided by explicit hypotheses about structure, not as an opaque byproduct of differentiating a loss through a computation graph.

Backprop remains an effective local credit assignment rule, but it is semantics-agnostic: it optimizes whatever the loss measures, whether or not that measurement aligns with the intended invariants of the domain. In underspecified settings, scaling up gradient-based training often amounts to scaling brute force.

ReasonNet proposes a different center of gravity: a dedicated trainer network that (1) observes, (2) proposes structure, (3) verifies it under falsification gates, and only then (4) actuates learning through bounded, auditable update actions.

Prior Art / Positioning

Learned optimizers already exist: Andrychowicz et al. (2016) demonstrated an LSTM trained to output parameter updates that can outperform hand-designed optimizers on the task distribution it is meta-trained on. ReasonNet does not claim “learning-to-optimize is new.”

The delta is architectural and procedural:

- Structure artifacts are first-class objects (graphs, invariants, programs) rather than latent states inside an optimizer RNN.

- Actuation is gated by falsification tests (consistency/prediction/intervention), not by raw reward alone.

- Bounded actuation is enforced by design (envelopes over where/how much the trainer can modify learning), making the trainer more like a constrained control policy than a free-form updater.

Core Architecture: Student + Reasoning Trainer

Student (S) is the model we ultimately care about: a VLM, a policy network, a multimodal encoder-decoder—any system whose behavior we want to improve.

Trainer (T / ReasonNet) is not a labeler and not a standard optimizer. It is a controller that consumes:

- Raw samples (images, text, trajectories)

- Student outputs and failures (mistakes, uncertainty, calibration)

- Limited internal signals (selected activations / embeddings / probe heads; explicit cap on bandwidth and taps)

and produces three classes of outputs:

- Structure artifacts: scene graphs, object–relation triples, temporal segments, invariants, causal hypotheses, skill graphs.

- Training artifacts: pseudo-labels, counterexamples, curricula, loss components, evaluation probes.

- Update actions: bounded proposals that change how S learns (usually not direct full-weight edits).
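To make "first-class structure artifact" concrete, here is a minimal sketch of what one artifact record might look like for the scene-graph case. The class name `SceneGraphArtifact`, its fields, and the `with_score` helper are illustrative assumptions, not a schema defined by this project.

```python
from dataclasses import dataclass
from typing import FrozenSet, Tuple

Triple = Tuple[str, str, str]  # (subject, relation, object)


@dataclass(frozen=True)
class SceneGraphArtifact:
    """Hypothetical schema for one structure artifact (assumed, not from the source)."""
    sample_id: str
    triples: FrozenSet[Triple]
    # Gate scores are attached as the artifact is verified, e.g. (("consistency", 0.9),)
    gate_scores: Tuple[Tuple[str, float], ...] = ()

    def with_score(self, gate: str, score: float) -> "SceneGraphArtifact":
        # Artifacts are immutable; scoring returns a new record so logs stay auditable.
        return SceneGraphArtifact(
            self.sample_id, self.triples, self.gate_scores + ((gate, score),)
        )


artifact = SceneGraphArtifact(
    "img-001",
    frozenset({("cube", "left_of", "sphere"), ("cube", "color", "red")}),
)
scored = artifact.with_score("consistency", 0.9)
```

Keeping the record immutable is one way to make the artifact log append-only: every gate result produces a new object rather than editing an old one.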

The design intent is a legible loop: a chain of claims that can be tested and ablated, rather than a single scalar loss whose gradients carry all meaning.

Measurable Structure: Earned Claims, Not Narration

A core failure mode in modern reasoning systems is plausible narration: outputs that read coherently but do not causally predict improvement. ReasonNet treats reasoning as a draft, then forces distillation into artifacts that can be falsified.

A structure artifact is accepted only if it passes at least one gate:

- Consistency gate: stability under paraphrase/augmentation/viewpoint shifts (artifact-level agreement metrics, not just textual similarity).

- Predictive gate: conditioning on the artifact improves prediction/compression (e.g., lower NLL, tighter calibrated uncertainty, better next-token or next-state prediction).

- Intervention gate: editing the artifact causes student behavior to change in the predicted direction (counterfactual prompts, relation swaps, controlled scene edits).

Artifacts that cannot earn a gate score are logged but cannot trigger actuation.
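As a rough illustration of the acceptance rule above, the sketch below scores consistency as mean pairwise Jaccard agreement between the triples extracted from several augmented views, then accepts an artifact if any gate score clears its threshold. The Jaccard metric, the threshold values, and all function names are assumptions chosen for illustration; the source does not fix a particular agreement metric.

```python
from typing import Dict, Iterable, Set, Tuple

Triple = Tuple[str, str, str]

# Hypothetical per-gate thresholds (assumed values, to be set per benchmark).
THRESHOLDS: Dict[str, float] = {
    "consistency": 0.5,
    "predictive": 0.05,
    "intervention": 0.6,
}


def jaccard(a: Set[Triple], b: Set[Triple]) -> float:
    """Artifact-level agreement: shared triples over all triples."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def consistency_score(views: Iterable[Set[Triple]]) -> float:
    """Mean pairwise Jaccard agreement across artifacts from augmented views."""
    views = list(views)
    pairs = [(i, j) for i in range(len(views)) for j in range(i + 1, len(views))]
    if not pairs:
        return 0.0  # a single view cannot demonstrate stability
    return sum(jaccard(views[i], views[j]) for i, j in pairs) / len(pairs)


def passes_any_gate(scores: Dict[str, float], thresholds: Dict[str, float]) -> bool:
    """Accept if at least one gate score meets its threshold; missing scores never pass."""
    return any(scores.get(g, float("-inf")) >= t for g, t in thresholds.items())


a, b, c = ("cube", "left_of", "sphere"), ("cube", "color", "red"), ("sphere", "color", "blue")
score = consistency_score([{a, b}, {a, b}, {a, c}])  # (1 + 1/3 + 1/3) / 3 = 5/9
accepted = passes_any_gate({"consistency": score}, THRESHOLDS)
```

An artifact with no recorded scores is never accepted here, which mirrors the rule that unverified artifacts are logged but cannot trigger actuation.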

Actuation Envelopes (What “Bounded” Means)

ReasonNet’s agency is intentionally limited; the point is to remove degrees of freedom that enable reward hacking and training collapse.

Example envelope (to be set per benchmark):

- Frequency: at most M trainer actions per N student steps.

- Surface area: at most K layers touched; no access to the full parameter tensor by default.

- Magnitude: per-episode update-norm budget ε (hard clip + accounting).

- Parameterization: low-rank adapters only (rank ≤ r) for Tier B; direct deltas only in Tier C with stricter ε and rollback.

The harness rejects out-of-envelope actions and emits a trace for audit.
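A minimal sketch of how such a harness could check the four envelope dimensions and keep an audit trace. The class and field names are hypothetical, "M actions per N steps" is interpreted as a sliding window (an assumption), and for brevity the magnitude check rejects an over-budget action instead of hard-clipping it.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Envelope:
    max_actions: int    # M: trainer actions allowed ...
    window_steps: int   # N: ... per this many student steps
    max_layers: int     # K: layers one action may touch
    norm_budget: float  # epsilon: per-episode update-norm budget
    max_rank: int       # r: adapter rank limit


@dataclass(frozen=True)
class Action:
    step: int
    layers: Tuple[str, ...]
    delta_norm: float
    rank: int


class EnvelopeHarness:
    """Hypothetical enforcement harness: accepts or rejects, and logs why."""

    def __init__(self, env: Envelope) -> None:
        self.env = env
        self.accepted_steps: List[int] = []
        self.spent_norm = 0.0
        self.trace: List[Tuple[int, str]] = []  # (step, "accepted" or rejection reason)

    def check(self, a: Action) -> bool:
        recent = [s for s in self.accepted_steps if a.step - s < self.env.window_steps]
        if len(recent) >= self.env.max_actions:
            reason = "frequency"
        elif len(set(a.layers)) > self.env.max_layers:
            reason = "surface_area"
        elif a.rank > self.env.max_rank:
            reason = "rank"
        elif self.spent_norm + a.delta_norm > self.env.norm_budget:
            reason = "magnitude"
        else:
            reason = ""
        self.trace.append((a.step, reason or "accepted"))
        if reason:
            return False
        self.accepted_steps.append(a.step)
        self.spent_norm += a.delta_norm  # budget accounting only for accepted actions
        return True


harness = EnvelopeHarness(Envelope(max_actions=2, window_steps=100, max_layers=2,
                                   norm_budget=1.0, max_rank=4))
results = [
    harness.check(Action(0, ("l1",), 0.4, 4)),
    harness.check(Action(10, ("l1", "l2", "l3"), 0.1, 4)),  # touches too many layers
    harness.check(Action(20, ("l2",), 0.5, 4)),
    harness.check(Action(30, ("l1",), 0.05, 2)),            # third action inside the window
    harness.check(Action(150, ("l1",), 0.2, 2)),            # would exceed the norm budget
]
```

The `trace` list is the audit artifact: every proposal, accepted or not, leaves a record with the reason.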

Update Pathways (Three Tiers)

This project is staged to avoid betting everything on a full replacement of backprop.

Tier A — Reasoned training control (practical).

T proposes curricula, counterexamples, and loss shaping; S trains with a standard optimizer. The question is: do verified structure artifacts improve sample efficiency and OOD robustness even when weight updates are conventional?

Tier B — Constrained hybrid updates (near-term frontier).

T emits bounded actions such as:

- Layerwise learning-rate gates and schedule changes

- Gradient modifiers (clipping schedules, per-layer scaling)

- Low-rank adapter deltas (LoRA-style patches) under ε/r/K/M/N envelopes

This tier makes “reason-to-update” literal while keeping changes reversible and attributable.
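One way to picture a bounded low-rank delta is the pure-Python sketch below: the trainer proposes factors `a` and `b`, the product `a @ b` is the weight patch, and the patch is rescaled so its Frobenius norm stays within ε. The function names, the choice of Frobenius norm, and rescaling as the clipping rule are assumptions for illustration; a real implementation would use a tensor library.

```python
import math
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain nested-list matrix product."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def frobenius(m: Matrix) -> float:
    return math.sqrt(sum(v * v for row in m for v in row))


def bounded_lowrank_delta(a: Matrix, b: Matrix, max_rank: int, eps: float) -> Matrix:
    """Return delta = a @ b, rescaled so that ||delta||_F <= eps.

    a has shape (d_out, r) and b has shape (r, d_in); r must not exceed max_rank.
    """
    rank = len(b)
    if rank > max_rank or any(len(row) != rank for row in a):
        raise ValueError("adapter rank exceeds envelope or factor shapes disagree")
    delta = matmul(a, b)
    norm = frobenius(delta)
    scale = min(1.0, eps / norm) if norm > 0 else 1.0
    return [[scale * v for v in row] for row in delta]


def apply_delta(w: Matrix, delta: Matrix, sign: float = 1.0) -> Matrix:
    """Apply (sign=+1) or revert (sign=-1) a patch without mutating w."""
    return [[wv + sign * dv for wv, dv in zip(wr, dr)] for wr, dr in zip(w, delta)]


a = [[1.0], [2.0]]   # (2 x 1) factor
b = [[3.0, 4.0]]     # (1 x 2) factor
clipped = bounded_lowrank_delta(a, b, max_rank=1, eps=1.0)
w0 = [[0.5, 0.5], [0.5, 0.5]]
w1 = apply_delta(w0, clipped)
restored = apply_delta(w1, clipped, sign=-1.0)
```

Because the patch is stored separately from the base weights, subtracting it restores the previous state, which is what makes this kind of action reversible and attributable.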

Tier C — Direct update policy (research-grade).

T outputs direct parameter deltas or optimizer states with minimal gradient reliance. This tier is only justified if Tier B yields consistent, stable gains across seeds and task variations.

Training the Trainer Without Circularity

Training T is meta-learning: the trainer is judged by the improvement it induces in S under fixed budgets.

A viable protocol is episodic evaluation:

- Snapshot S (or initialize from a fixed checkpoint).

- Run N-step training episodes where T proposes artifacts and (if gated) actions.

- Evaluate S on held-out metrics (generalization, OOD, calibration, robustness).

- Update T to maximize improvement, penalizing instability and non-generalizable shortcuts.

Traceability is enforced with action gating: T may only actuate when it produces an artifact whose verification score exceeds a threshold τ and whose predicted gain (from a calibrated regressor/critic trained on prior episodes) matches observed gains within tolerance δ on replayed episodes. Otherwise, T is forced into observe-only mode.
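The gating rule above can be sketched as a small decision function. Here "matches within tolerance δ on replayed episodes" is read as every replayed episode having |predicted − observed| ≤ δ; that reading, along with the names `GateConfig` and `trainer_mode`, is an assumption, and a mean-error criterion would be an equally plausible alternative.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class GateConfig:
    tau: float    # minimum verification score required to actuate
    delta: float  # tolerance on |predicted gain - observed gain|


def critic_is_calibrated(predicted: Sequence[float],
                         observed: Sequence[float],
                         delta: float) -> bool:
    """True if the critic's predicted gains match observed gains on every replayed episode."""
    if not predicted or len(predicted) != len(observed):
        return False  # no replay evidence means no actuation
    return all(abs(p - o) <= delta for p, o in zip(predicted, observed))


def trainer_mode(verification_score: float,
                 predicted_gains: Sequence[float],
                 observed_gains: Sequence[float],
                 cfg: GateConfig) -> str:
    """Return "actuate" only when both conditions hold; otherwise "observe_only"."""
    if verification_score > cfg.tau and critic_is_calibrated(
        predicted_gains, observed_gains, cfg.delta
    ):
        return "actuate"
    return "observe_only"


cfg = GateConfig(tau=0.8, delta=0.01)
mode = trainer_mode(0.9, [0.020, 0.030], [0.025, 0.028], cfg)
```

Defaulting to observe-only when replay evidence is missing keeps the failure mode conservative: the trainer has to earn actuation rather than lose it.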

Canonical Wedge Benchmark (First Demo Spec)

To prevent narrative drift, the first demonstration is intentionally narrow:

- Task: compositional VQA with synthetic scenes and ground-truth scene graphs (structure artifacts are unambiguous and cheaply verifiable).

- OOD split: novel combinations of attributes/relations not present in training (compositional generalization).

- Baselines: tuned standard training (AdamW + schedule), plus Tier A equivalents without structure gating (same capacity, same compute).

- Budget template: fixed model size, fixed steps, fixed tokens/images, fixed seeds (report mean ± CI).
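For the "mean ± CI" reporting in the budget template, a minimal sketch using only the standard library is shown below. It uses a normal-approximation interval (z = 1.96 for roughly 95% coverage), which is an assumption; with only a handful of seeds, a Student-t critical value would give a wider and more appropriate interval. The accuracy values are made up for illustration.

```python
import math
import statistics
from typing import Sequence, Tuple


def mean_with_ci(values: Sequence[float], z: float = 1.96) -> Tuple[float, float]:
    """Return (mean, half_width) of a normal-approximation confidence interval."""
    if len(values) < 2:
        raise ValueError("need at least two seeds to estimate spread")
    mean = statistics.fmean(values)
    # Standard error of the mean from the sample standard deviation (n - 1 denominator).
    sem = statistics.stdev(values) / math.sqrt(len(values))
    return mean, z * sem


# Illustrative per-seed OOD accuracies (fabricated placeholders, not results).
per_seed_accuracy = [0.70, 0.72, 0.74, 0.76, 0.78]
mean, half_width = mean_with_ci(per_seed_accuracy)
report = f"{mean:.3f} ± {half_width:.3f}"
```

Reporting the half-width alongside the mean makes it easier to see whether a claimed gain over baseline exceeds seed-to-seed noise.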

Success criteria are comparative and falsifiable:

- OOD improvement at equal compute, and/or equal performance at lower compute.

- Ablations show gains collapse when gates are removed or artifacts are randomized.

- Stability improves (lower collapse rate, fewer spikes, tighter calibration), not just mean accuracy.

Evaluation: What Counts as “Better”

ReasonNet is not validated by cleverness. It is validated by hard comparisons under fixed constraints.

Primary metrics

- Equal performance at lower data/compute (steps, tokens, images)

- Higher OOD and compositional performance under a defined split

- Training stability: fewer collapses, less variance across seeds, improved calibration
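The "defined split" for compositional OOD evaluation could be constructed in many ways. The sketch below shows one deterministic option, assumed here for illustration: hold out attribute/relation pairs along a diagonal of the index grid, which guarantees every individual attribute and relation still appears in training while the held-out combinations do not. The function name and the diagonal rule are not from the source.

```python
from itertools import product
from typing import List, Set, Tuple

Combo = Tuple[str, str]  # (attribute, relation)


def diagonal_holdout(attributes: List[str],
                     relations: List[str],
                     modulus: int) -> Tuple[Set[Combo], Set[Combo]]:
    """Split all attribute/relation pairs into (train, ood) sets.

    Pair (attributes[i], relations[j]) is held out when (i + j) % modulus == 0.
    With modulus >= 2 and at least two items per list, every primitive
    still occurs in at least one training pair.
    """
    if modulus < 2 or len(attributes) < 2 or len(relations) < 2:
        raise ValueError("need modulus >= 2 and at least two attributes and relations")
    train: Set[Combo] = set()
    ood: Set[Combo] = set()
    for (i, attr), (j, rel) in product(enumerate(attributes), enumerate(relations)):
        (ood if (i + j) % modulus == 0 else train).add((attr, rel))
    return train, ood


ATTRS = ["red", "blue", "green"]
RELS = ["left_of", "right_of", "behind"]
train_combos, ood_combos = diagonal_holdout(ATTRS, RELS, modulus=3)
```

A question would then be assigned to the OOD split if it involves any held-out pair, and to training only if it involves none.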

Secondary metrics

- Artifact quality: agreement with ground truth when available; otherwise predictive/intervention strength

- Reproducibility across task variants in the same family

- Auditability: artifact logs + gate scores explain when and why actions occurred

Success is not “it reasons.” Success is “verified structure artifacts causally and reproducibly improve learning efficiency or robustness under bounded actuation.”

Risk Surface and Controls

Rationalization risk. T produces persuasive stories that do not correspond to causal levers.

Control: gating plus ablation-first reporting; narration that has not passed a gate cannot trigger actuation.

Goodharting risk. T optimizes short-horizon metrics at the expense of long-horizon capability.

Control: multi-horizon evaluation, delayed metrics, and OOD splits designed to punish shortcuts.

Metric manipulation. A high-agency trainer can learn to game the evaluator.

Control: envelope enforcement, frozen evaluator protocols, red-team tasks, and randomized audits.

Non-stationary collapse. Co-adaptation between T and S destabilizes training.

Control: conservative schedules, replay buffers of episodes, rollback on instability signals, and explicit stability penalties.
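As an illustration of "rollback on instability signals," the sketch below keeps the last stable parameter snapshot and restores it when the loss is non-finite or spikes well above a recent running average. The spike rule (loss greater than `spike_ratio` times the mean of recent losses, assuming positive losses), the class name, and the default values are all assumptions; the source does not specify which instability signals are used.

```python
import math
from collections import deque
from typing import Deque, Dict, List

Params = Dict[str, List[float]]


class RollbackGuard:
    """Hypothetical guard: returns the last stable snapshot when a step looks unstable."""

    def __init__(self, initial: Params, spike_ratio: float = 2.0, window: int = 5) -> None:
        self.snapshot: Params = {k: list(v) for k, v in initial.items()}
        self.recent: Deque[float] = deque(maxlen=window)  # recent stable losses
        self.spike_ratio = spike_ratio
        self.rollbacks = 0

    def unstable(self, loss: float) -> bool:
        if not math.isfinite(loss):
            return True
        if not self.recent:
            return False  # no baseline yet
        baseline = sum(self.recent) / len(self.recent)
        return loss > self.spike_ratio * baseline

    def observe(self, params: Params, loss: float) -> Params:
        """Return params to continue from: the new ones if stable, else the snapshot."""
        if self.unstable(loss):
            self.rollbacks += 1
            return {k: list(v) for k, v in self.snapshot.items()}
        self.recent.append(loss)
        self.snapshot = {k: list(v) for k, v in params.items()}
        return params


guard = RollbackGuard({"w": [0.0]})
p1 = guard.observe({"w": [0.1]}, 1.0)
p2 = guard.observe({"w": [0.2]}, 0.9)
p3 = guard.observe({"w": [5.0]}, 4.0)            # spike relative to recent losses
p4 = guard.observe({"w": [0.3]}, float("nan"))   # non-finite loss
```

Unstable losses are deliberately excluded from the running baseline so a single spike does not raise the bar for detecting the next one.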

Why This Pattern Matters (If It Works)

Backprop-centric training collapses learning into a scalar objective and delegates meaning to gradients. ReasonNet separates learning into two engineered stages:

- Structure stage: convert unstructured evidence into explicit, testable artifacts.

- Control stage: apply bounded training actions only when artifacts earn verification.

If validated, this becomes a reusable training-systems template for messy domains: a falsification-first loop where intermediate artifacts are the unit of accountability, and “learning” becomes a controlled, auditable process rather than an end-to-end numeric ritual.

Generation Prompts

Image Prompt

Minimalist A2 technical poster, white background, black 0.5pt linework: two labeled blocks “Student S” and “Trainer T (ReasonNet)” with arrows to three gate icons labeled “Consistency,” “Predictive,” “Intervention,” then to a bounded-action box “ε/r/K/M/N envelope.” Add two small insets: a scene graph and a loss curve. Swiss typography, subtle cyan accents only.

Video Prompt

12-second cinematic macro flythrough: translucent neural lattice (Student) with a ghost network overlay (Trainer). The trainer emits crisp cyan graph primitives that approach three luminous gates (Consistency, Predictive, Intervention); only passing graphs continue to a control panel showing ε/r/K/M/N budgets, then produce small localized pulses that gently modify the lattice. Cool monochrome palette, shallow DOF, clean HUD text, no clutter.

3D Model Prompt

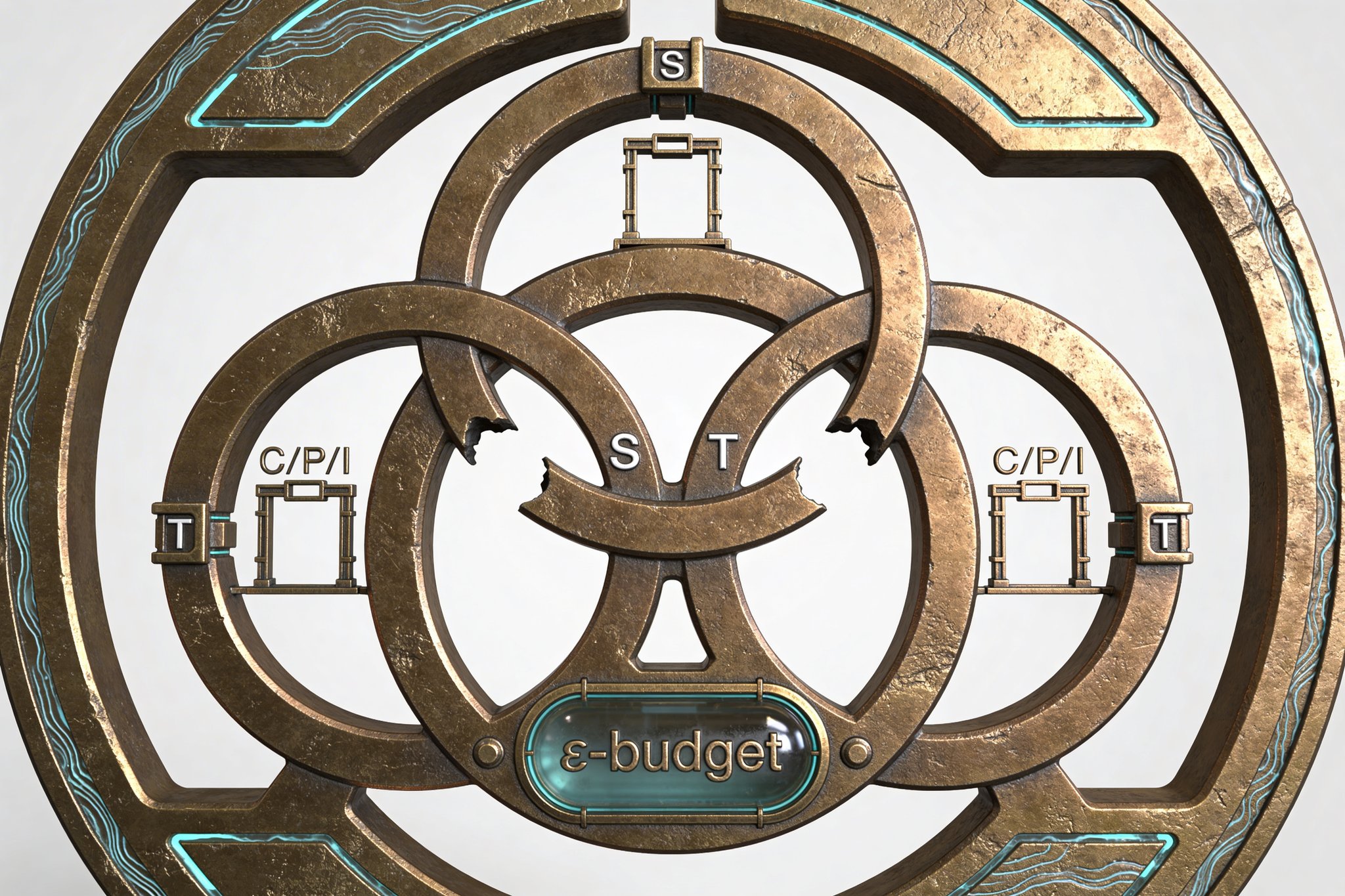

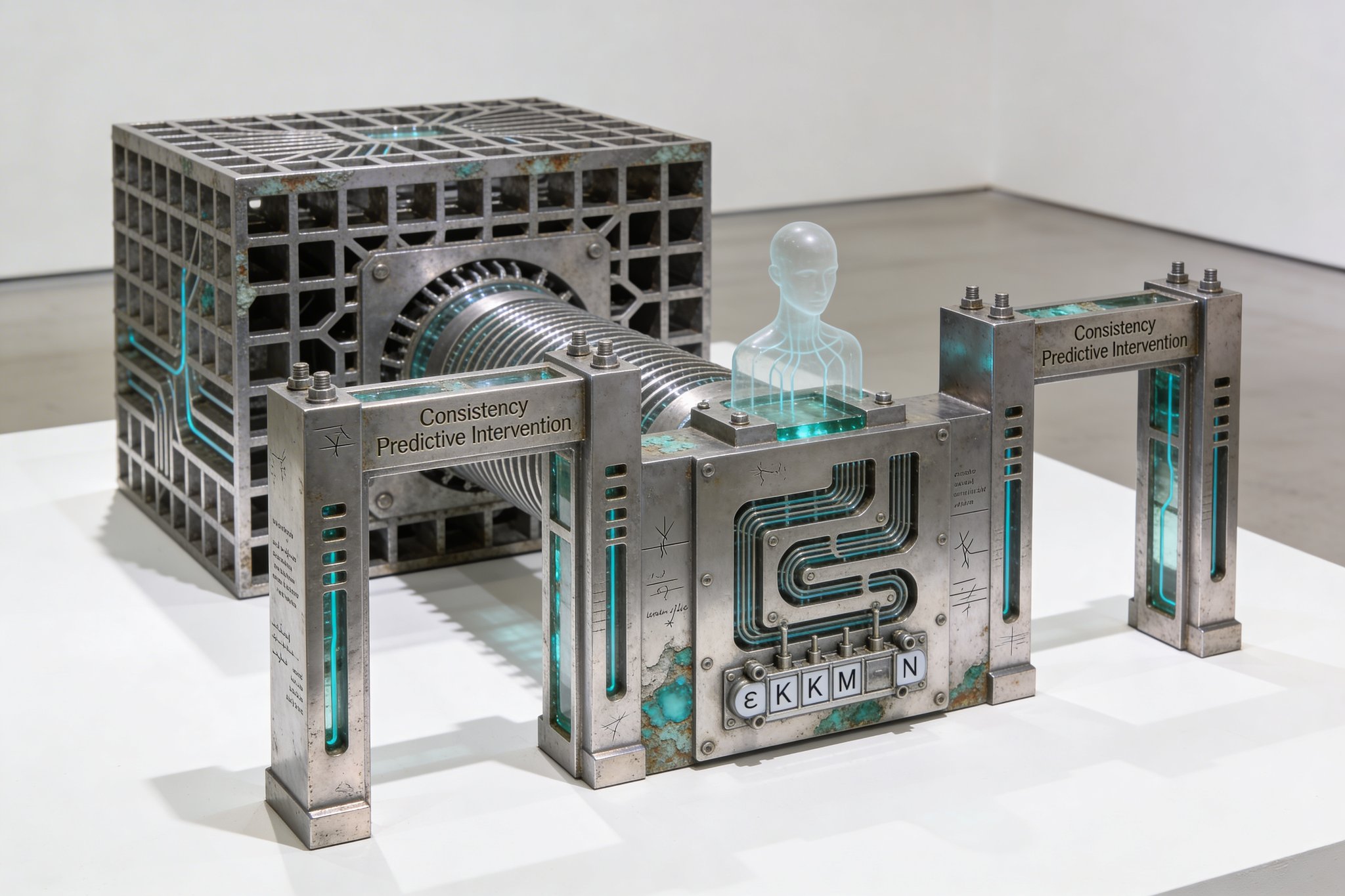

Manifold modular emblem for close-up rendering: two interlocking rings engraved “S” and “T,” connected by three rectangular gate frames labeled C/P/I, with thin conduits leading to a small capped reservoir labeled “ε budget.” Matte ceramic white primary surfaces, brushed aluminum edges, translucent cyan inserts for accepted structure. Separate parts for rings, gates, conduits, and reservoir; clean fillets, precise bevel widths, watertight geometry.

Constraints & Non-Goals

- Gradients may remain as a substrate, but outer-loop training control must be learned and justified by verified structure artifacts.

- All proposed structure must be measurable: it must pass consistency, predictive, or intervention gates and survive automatic ablations.

- All demonstrations must be reproducible on public datasets with fixed compute budgets and strong tuned baselines.

- Trainer actuation is explicitly budgeted: per-episode delta-norm ≤ ε, adapter rank ≤ r, max K layers touched, and max M actuation events per N student steps; out-of-envelope actions are rejected.

Feasibility Gradient

Hybrid ReasonNet implementations are feasible now: a VLM/LLM can propose candidate structure artifacts, a verification harness can falsify them, and bounded actuation can be limited to curriculum, loss shaping, and low-rank adapter deltas. This is aligned with the learned-optimizer literature (e.g., update policies in the style of Andrychowicz et al., 2016) but adds an auditable structure layer and gating that should reduce rationalized-but-false updates. Full end-to-end replacement of backprop at scale remains unlikely in the near term; the critical risks are structure overfitting, Goodharting on short-horizon metrics, and co-adaptation instability between trainer and student.

Next Actions

- Define a single wedge benchmark: compositional VQA with synthetic scene graphs; fix student architecture/size, optimizer baseline, step budget, and an OOD split (novel attribute/relation compositions).

- Implement the verification harness: artifact schemas, gate metrics (consistency/prediction/intervention), and automatic ablation reporting with seed-averaged confidence intervals.

- Prototype Tier A: trainer outputs curricula + loss terms; evaluate in-split and OOD gains vs tuned baselines under identical compute.

- Prototype Tier B: trainer outputs bounded LoRA deltas + layerwise LR gates; enforce ε/r/K/M/N envelopes and compare stability/collapse rates vs baseline training.

Restricted Layer

Behind the access wall: student↔trainer interface schematics (activation taps, memory limits, latency budgets), a structure-primitive library (graphs, invariants, temporal programs) with concrete schemas, the falsification-first verification harness implementation, compute-accounted demo recipes, and safety controls (envelope enforcement, evaluator hardening, and red-team suites for metric gaming).

Last updated: February 23, 2026